The critical dance with GenAI

Microsoft recently released a study investigating whether the critical thinking of knowledge workers is affected by the use of LLMs (Lee et al., 2025). Now if the world were just and perfect, delegating cognitive tasks to machines would free up human mental capacity. But the world is no such place, and it is unclear whether human cognition in the workplace is positively or negatively affected by LLM use.

One sign that LLM use might impair the critical thinking skills of workers is a phenomenon named mechanised convergence: knowledge workers with LLMs generate less diverse outcomes than they would have without the technology (Sarkar, 2023). It could of course be that such convergence is simply the human-machine combo generating less varied, but better responses. However, it could also mean that workers don't really reflect on LLM output and simply follow its suggestions.

In the Microsoft study, the researchers wanted to get a better understanding of how a variety of knowledge workers are using generative artificial intelligence (GenAI, which includes but is not limited to LLM use), so they surveyed them on the topic. They asked the workers to list three tasks for which they had recently used GenAI, ideally one from each category in the so-called Brachman's ontology (Brachman, El-Ashry, Dugan & Geyer, 2024):

- Creation (e.g. creating an image or brainstorming an idea)

- Information (e.g. gathering knowledge, analysing data or summarising a text)

- Advice (e.g. checking whether some code is in order, restructuring a text or seeking suggestions for problem-solving)

Critical thinking while using GenAI

Once these tasks were listed, workers were asked to describe them in more detail and to indicate whether they had been thinking critically while performing them. They could use their own judgment on whether something counted as critical thinking or not, and it turned out that for most of them this came down to actively ensuring that the GenAI output was of good quality for the purposes of work.

In practice, that meant critical thinking involved all sorts of cognitive activities. Workers had thought critically about what they meant to achieve with GenAI, about the particular queries they submitted and altered, about whether responses met important objective criteria, about whether the responses seemed feasible, logically coherent and relevant, and about ways to verify information – chasing citations or finding external sources to double-check information provided by GenAI. Critical thinking also helped workers integrate GenAI output into their overall workflow – choosing which machine-generated bits to keep and which ones to drop, or revising the output to better serve the purpose of the task.

But critical thinking was not a given! Workers who were confident that GenAI could perform the task at hand tended to display less critical thinking. The same thing happened if the task was not considered to be very important or complicated. Other drivers of limited critical thinking were time pressure or simply not feeling responsible for the quality of the output. Most importantly, workers did not engage in much critical thinking if they felt that they themselves lacked the expertise or skills necessary to perform the task. Indeed, workers' confidence in their own abilities to perform a task predicted their amount of critical thinking.

Other things that boosted critical thinking were the desire to provide high-quality work, to avoid things like submitting dysfunctional code and to tailor the output to specific colleagues or clients. Interestingly enough (especially for education), critical thinking was also boosted by the workers' desire to improve their own skills: they critically assessed the output from GenAI to learn from it.

The cognitive efforts of using GenAI

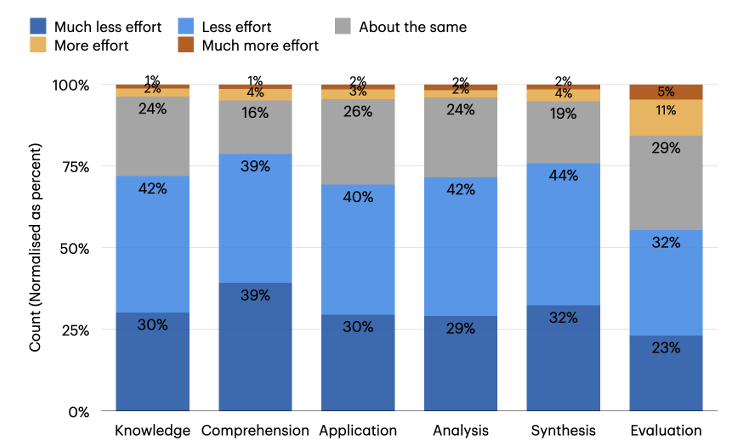

While it's good news that workers report many examples of thinking critically while working with GenAI, one might wonder where that leaves the gains of the tool. Are the jobs of knowledge workers easier if they have to remain vigilant about machine output all the time? In the survey, the workers were also asked to comment on the cognitive effort that GenAI use takes. To do so, they were asked to consider effort at each level of Bloom's taxonomy.

The workers reported that obtaining knowledge became easier, but that it now required an added verification step. Similarly, while GenAI could smooth the process of finding solutions to problems, effort increased for integrating such solutions into an actual workflow or application. What GenAI offered was scaffolding for complicated tasks and feedback cycles, but the workers indicated that this requires what the authors of the study label a form of "stewardship": steering and assessing the machine output.

In a way, the knowledge workers become metacognitive agents towards the GenAI, doing quality control on the flow of information that originates from the artificial intelligence. This requires domain expertise, as the positive relation between workers' confidence in their own abilities and critical thinking illustrates, but more specifically it requires the skills to steer the GenAI in a productive direction, to fact-check where relevant and to see how the GenAI input can be made relevant to the task at hand. This is a dynamic process, a critical dance of humans and GenAI that depends on workers anticipating, channeling and responding to the moves of the machines.

To perform this dance, workers need new skills that arguably depend on an increase in critical, evaluative thinking.

References

Brachman, M., El-Ashry, A., Dugan, C., & Geyer, W. (2024, May). How knowledge workers use and want to use LLMs in an enterprise context. In _Extended Abstracts of the CHI Conference on Human Factors in Computing Systems_ (pp. 1-8).

Lee, H. P. H., Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R., & Wilson, N. (2025). The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers.

Sarkar, A. (2023, June). Exploring perspectives on the impact of artificial intelligence on the creativity of knowledge work: Beyond mechanised plagiarism and stochastic parrots. In _Proceedings of the 2nd Annual Meeting of the Symposium on Human-Computer Interaction for Work_ (pp. 1-17).
